LLMs are ghosts, Two types of tools, AI in organizational chaos

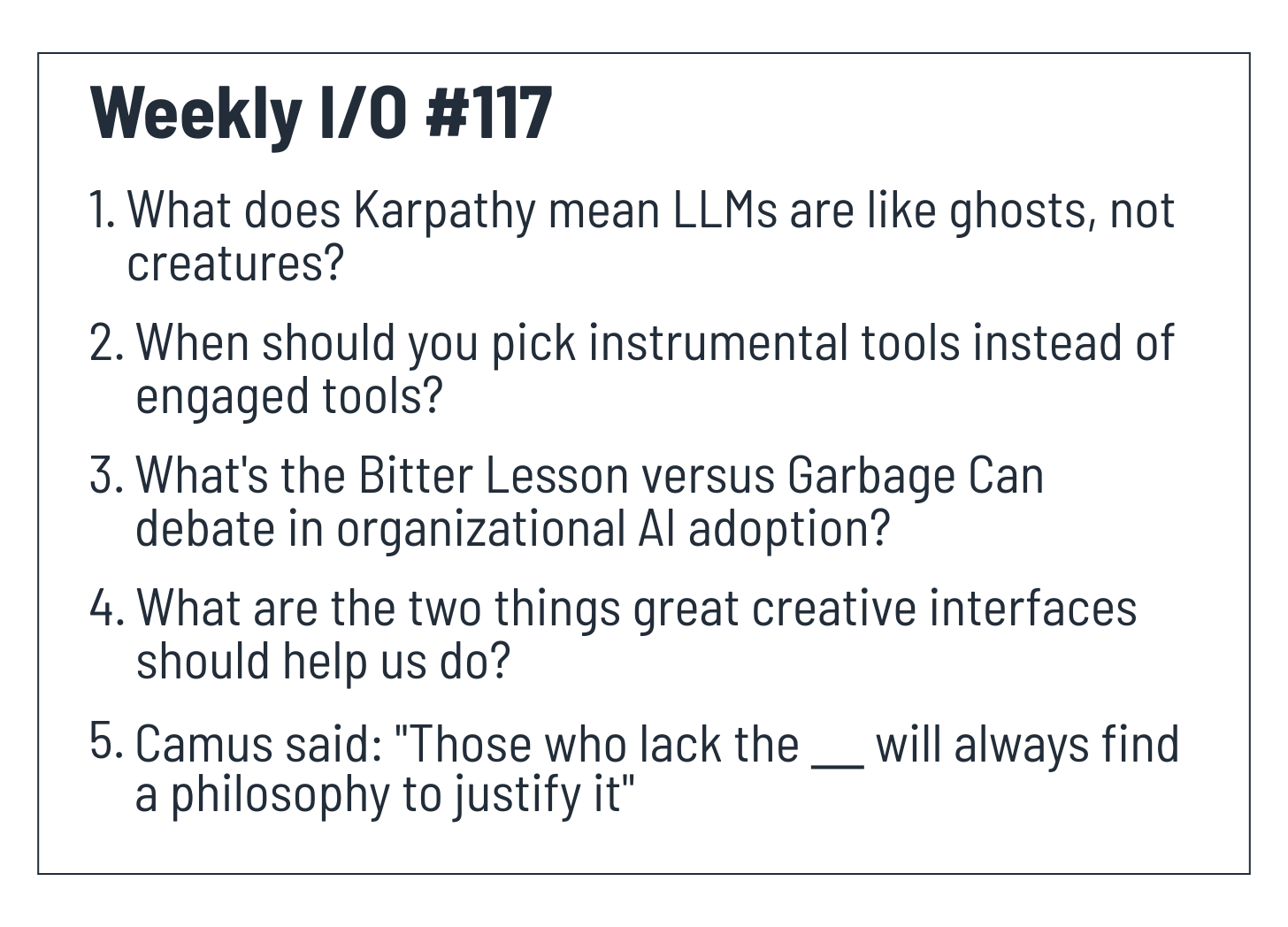

Weekly I/O #117: LLMs are Ghosts not Creatures, Instrumental and Engaged Tools, Bitter Lesson or Garbage Can, Interfaces Help See and Express, Justify the Lack of Courage

Hey friends,

Here’s your weekly dose of inputs and outputs. Happy learning!

Absorb this issue better with the forward testing effect

Input

Here’s a list of what I learned this week.

1. LLMs are like ghosts, not creatures. They’re digital entities trained by imitating human data on the internet, not through evolution. This difference shapes what they can and can’t do.

Podcast: Andrej Karpathy — AGI is still a decade away

What kind of intelligence are we creating with large language models?

LLMs are digital entities. They’re trained by imitating human data on the internet. Karpathy calls this “crappy evolution,” but it’s the practically possible version of creating intelligence with our current technology.

Animals evolved differently. Evolution baked capabilities into their hardware over millions of years. A zebra runs minutes after birth. That ability is built-in, not learned. (I believe the same is true of curiosity.)

But we’re not running that evolutionary process with AI.

Karpathy is hesitant to draw inspiration from animals because AI doesn’t have the same foundations. Building systems that learn the way animals do would be wonderful (Sutton’s view), but animals don’t start from scratch. Much of what they can do comes from evolution, and that is a process we can’t replicate.

Instead, we’re building digital entities that learn by compressing and imitating human artifacts.

This is a fundamentally different form of intelligence. Understanding this difference helps us set realistic expectations for what AI can and cannot do for now.

The “ghost” metaphor also reminds us that LLMs are powerful but divorced from biological mechanisms. They don’t have bodies, don’t navigate physical space, and don’t experience the world the way evolved creatures do.

I think LLMs are going through a much slower form of natural selection, where humans exert their preferences on the models’ character. In this case, the selection pressure comes from how frontier AI labs interpret and predict their users’ preferences. It’s also important to remember that evolutionary success judges everything by the criteria of survival and reproduction, with no regard for individual suffering or happiness.

2. Two types of tools: instrumental tools like magic buttons that do the work for you, and engaged tools like violins that help you master the work yourself. When picking tools, ask if you need results or understanding.

Podcast: Linus Lee - Engineering for Aliveness - Jackson Dahl

Every tool falls somewhere on a spectrum.

On one end: instrumental tools. These are magic buttons like ride-sharing apps and AI agents. They take your goal and deliver results fast, cheap, and easy. You don’t care how they work. You just want the job done. For instance, when you’re rushing somewhere, you use GPS. You don’t care about the route. You want to arrive.

The perfect instrumental tool reads your mind and completes the task instantly.

On the other end: engaged tools. Musical instruments. Code editors. Physical maps for exploration. These tools pull you into the complexity. They help you see clearly and express precisely. Mastery takes time, but that’s the point. These tools are a form of distributed cognition: part of your thinking happens through them.

The choice between these tools isn’t about expertise. It’s situational. You want GPS when you’re late. You want a paper map when you’re exploring. So when picking a tool, ask yourself:

Do I need results, or do I need understanding?

Pick the wrong tool and you’ll either waste time learning when you should be executing, or lose agency when you should be building mastery.